Project page on Amazon Studios

The Amazon Studios experience continues. As of last night there were 395 projects, and today before 5pm–less than 48 hours after launch–there were over 500. It gives you an idea of how many people see themselves as potential filmmakers and either don’t read or don’t understand the terms of the contract, or are desperate enough for a chance that they are willing to give away an 18-month option on their work.

Yesterday a new project appeared, and within five hours it accrued over 2000 “downloads,” thrusting it into the limelight of the first page and the various sidebars populated with the “most popular” projects. I surmise this is someone with a huge number of friends… or a bot. (There seems to be no limit to the number of times you can download from the same IP address.) My sense is that at this point, several entrants have experienced similar-seeming contests and are using the usual strategy of recruiting as many “reviews” as possible, as well as encouraging positive comments.

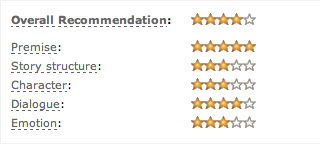

Last night also marked the first trickle of reviews. Like Amazon product reviews, reader reviews at Amazon Studios have a star rating system. A reviewer chooses between one and five stars in five categories (premise, structure, characters, dialogue, emotion) and an average of these is displayed as a “recommendation.” As of 5pm, most reviews are positive, and, while some are certainly random, I have noticed (since I know a few other players) that five-star reviews often emerge from a writer’s hometown, from people who share a surname with the writer, etc. Please don’t think I note this as a criticism of these writers–believe me, I’m not going to turn down positive reviews from anyone. (Seriously, leave me one now, I’ll take it.) But I think it is worth noting as part of understanding if and how the site is working thus far as a method of vetting creative projects. My current feeling is that, moving into only the second full day, politicking is still a primary means of moving the players around on the board.

So the question is, at some point will a critical mass of non-partisan commentators emerge that will act as an equalizer, and at that point, will the site perform its function?

I don’t have the answer to this, but I do have one data point–in the form of another exciting development from last night. I received my first review! And it was not from my hometown or from a family member. It was from a total stranger in Quebec (Martin). Nor did he give me a five-star review–far from it.

My reviewer’s spelling and punctuation leave something to be desired, and I don’t agree with much of what he said, BUT I don’t see him championing any other script, and he hasn’t written a script himself, so my main point here is that I believe he actually wrote this out of the goodness of his heart, with no agenda.

So… is this the guy the site needs more of? He is a passionate reader and willing to give his opinions, and that seems like one criterion. But is he an example of someone working at the level needed to vet creative product? How do we know whether to trust his opinion or not?

Within the Amazon community proper, a reviewer gets a good reputation (as I understand it) by reviewing products. Other people, who have either tried the product themselves or are deciding whether to try it based upon the reviews, rate a review in terms of helpfulness. Amazon Studios has set up something similar here, except that, as far as I can tell, the only person to rate a reader’s review is the writer himself. That could be problematic, as writers are known to be incredibly subjective about their own work.

“Hey, this Fred guy doesn’t like my writing (which is GREAT, by the way) so I don’t like Fred! I’m going to give him a low rating.” Or, “Yeah–my mom’s friend is absolutely right. This is better than Star Wars! Five stars!”

How to solve for this? My first thought is that other readers should ALSO be able to rate a person’s review. Maybe that person should be required to also write a review of the project in question to show they have read it and can judge–or maybe not. In actual development I’m sure execs feel they can determine good and helpful coverage without having read the script themselves–that’s why they want coverage! Right now the reviews are ostensibly “notes” for the writer, but they are also functioning as “recommendations” for other people as to whether they should bother to read or not…

So for both writers and our crowd-sourced development team, what constitutes a “helpful” review? Should it summarize the story or themes? Should it try to outline the structure? Should it speculate on whether the reader’s friends would go see it in a movie theater? I’m not really sure, but I bet some folks would have opinions.

Some possible advantages of review reviews and parameters for reviews are that they might:

- Encourage honesty. If you are going to be a repeat reviewer, you know you’ll be held accountable for your reviews.

- Start the process of sifting through and elevating the trust level in the stronger critics, building legitimacy.

- Grow the “intelligence of the crowd” by starting to highlight competent reviewers, thus modeling good “critical thinking” among the “newbies” in the crowd. (This last works alongside another suggestion I submitted to Amazon, about maybe bringing in some professional “guest reviewers” for the same reason–not to take over, but to educate the crowd and model some critique-writing behavior.)

PS. I know I’m discussing a lot of mechanics–the “how” of it. This doesn’t mean I haven’t also been thinking about the “why” and “should” questions that I think need to be asked–it’s just that right now, the mechanics are in flux and changing quickly, and I’m interested in recording that. Then I will definitely have some thoughts about what constitutes art, etc. In the meantime, John August posted today, starting to cover some of those bases.

(Originally published on barrington99.blogspot.com.)
